Here's how it works

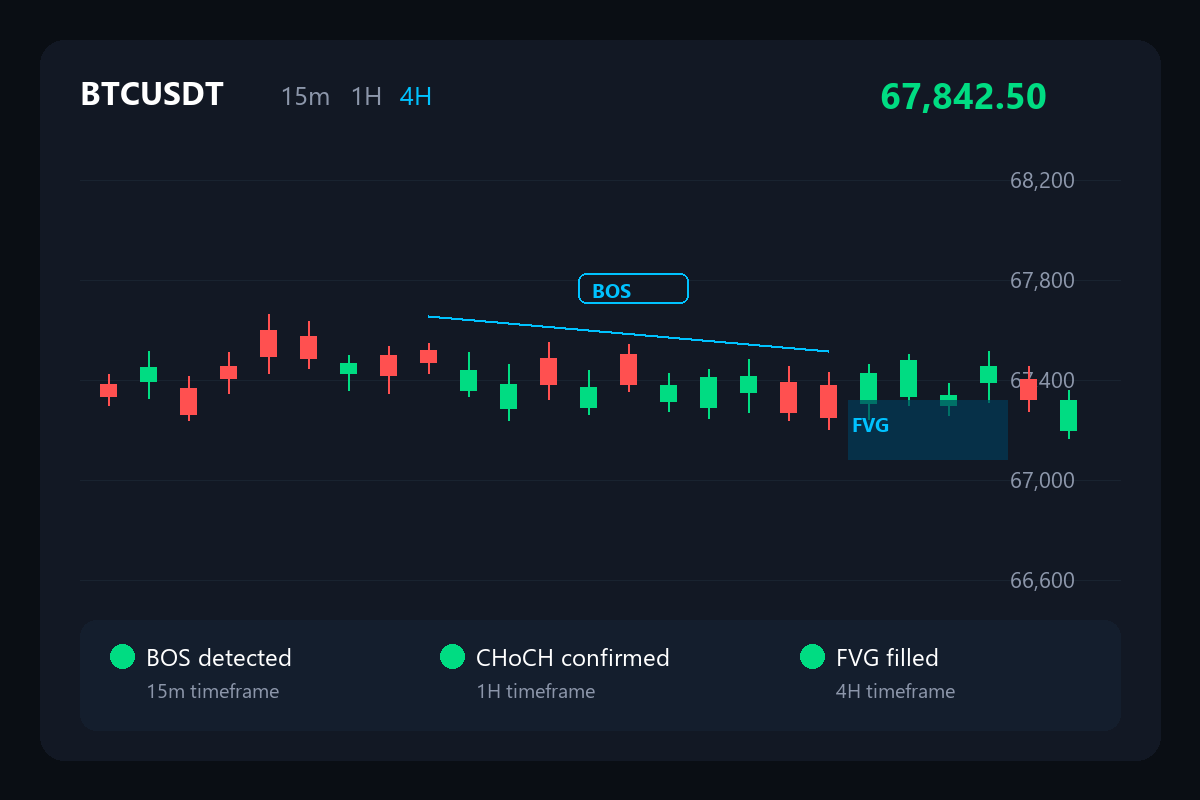

Detect the edge

Multi-modal vision AI scans charts across timeframes while pattern detectors identify high-probability setups in real time.

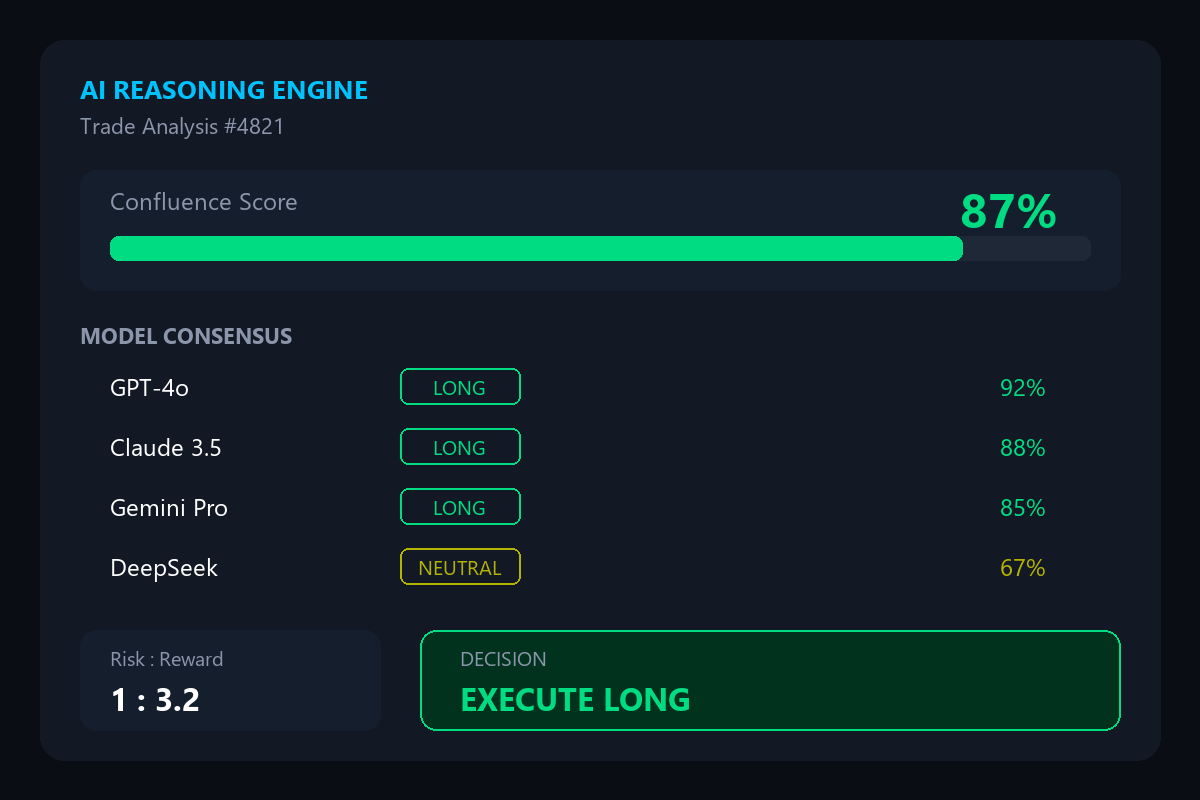

Reason with AI

Multiple LLM providers analyze confluence, assess risk-reward, and generate autonomous trade decisions with full reasoning chains.

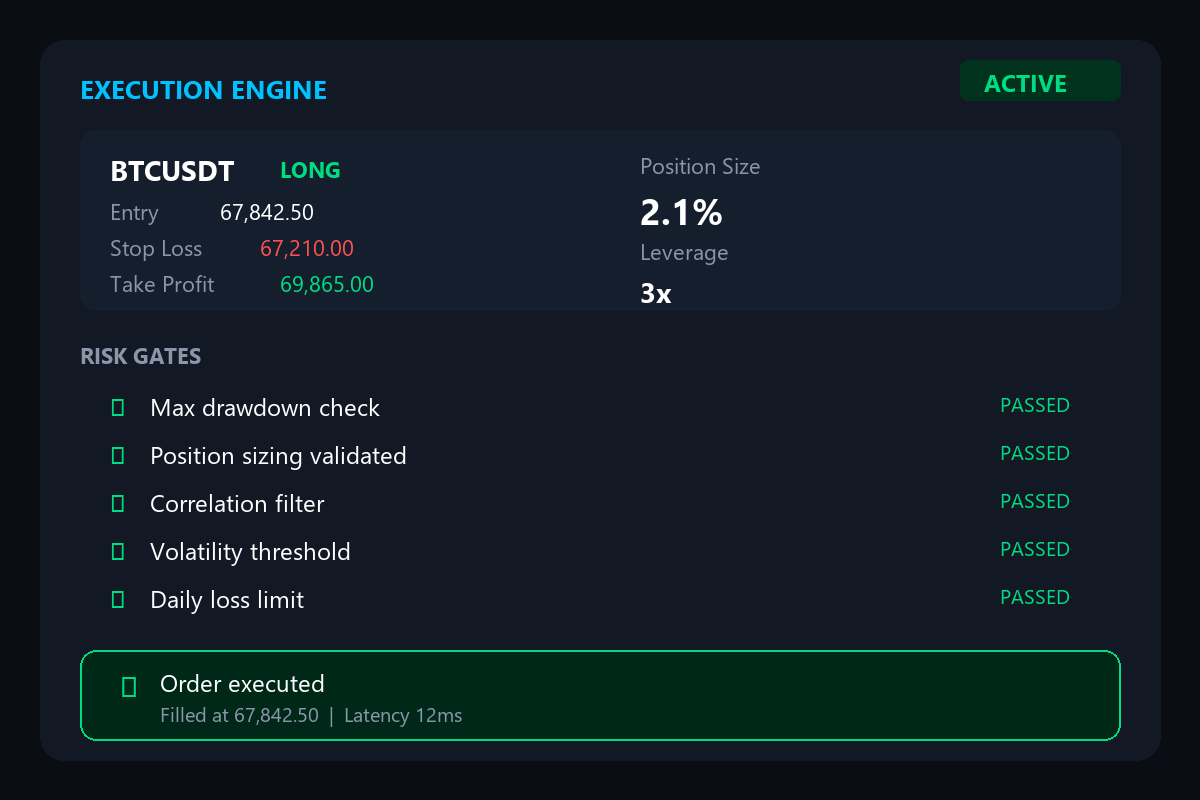

Execute with precision

Risk gates validate every decision. Position sizing, drawdown limits, and entry timing are enforced automatically before execution.
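
To make the idea of a risk gate concrete, here is a minimal sketch of a pre-execution check covering two of the controls named above: position sizing and drawdown limits. Every name and threshold in it (`TradeDecision`, `RiskLimits`, `check_risk_gates`, the 1% risk-per-trade cap, the 10% drawdown cap) is a hypothetical chosen for illustration and does not describe the product's actual implementation. Entry timing is left out because it depends on market data not covered here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TradeDecision:
    """Hypothetical shape of a proposed trade (illustrative only)."""
    entry: float  # planned entry price
    stop: float   # stop-loss price
    size: float   # position size in units


@dataclass(frozen=True)
class RiskLimits:
    """Assumed thresholds; real limits would be configured per account."""
    max_risk_fraction: float = 0.01  # max share of equity risked per trade
    max_drawdown: float = 0.10       # max tolerated drop from peak equity


def check_risk_gates(decision: TradeDecision, equity: float,
                     peak_equity: float,
                     limits: RiskLimits = RiskLimits()) -> list[str]:
    """Return the names of failed gates; an empty list means the trade may proceed."""
    failures = []

    # Gate 1: a trade with no stop distance has undefined risk.
    risk_per_unit = abs(decision.entry - decision.stop)
    if risk_per_unit == 0:
        failures.append("stop_distance")

    # Gate 2: block new trades once the account is too far below its peak.
    drawdown = (peak_equity - equity) / peak_equity
    if drawdown >= limits.max_drawdown:
        failures.append("drawdown_limit")

    # Gate 3: the loss if the stop is hit must stay within the per-trade cap.
    if decision.size * risk_per_unit > limits.max_risk_fraction * equity:
        failures.append("position_size")

    return failures


if __name__ == "__main__":
    proposed = TradeDecision(entry=100.0, stop=98.0, size=40.0)
    failed = check_risk_gates(proposed, equity=10_000.0, peak_equity=10_000.0)
    print("execute" if not failed else f"blocked: {failed}")
```

Returning the list of failed gates, rather than a single boolean, keeps each rejection explainable, which fits the emphasis on full reasoning chains in the previous step.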